How Motion Capture Works in Game Development

- Mimic Productions

- Mar 20


Updated: Apr 10

Motion Capture in Game Development is not a shortcut around animation. It is a performance pipeline that turns live movement into structured character data, then refines that data for gameplay, cinematics, and real-time interaction. When it is handled well, motion capture gives game characters physical credibility, emotional timing, and a sense of weight that is difficult to build from keyframes alone.

In modern production, a capture session is only one part of the process. The real value comes from how well the recorded movement passes through solving, cleanup, retargeting, rigging, engine integration, and final polish. A game studio may capture a stunt performer for traversal, an actor for dialogue scenes, or a full-body and facial performance for a hero character, but none of that reaches the player intact without a strong technical pipeline.

For developers, directors, and production teams, the important question is not whether mocap is used. It is how the captured performance is translated into something responsive, stable, and believable inside a game engine. That is where game development places different demands on the process than film.


What Motion Capture Means in a Game Pipeline

At its core, Motion Capture in Game Development records human movement and converts it into animation data. Depending on the system, that may come from optical cameras tracking markers, inertial suits using sensors, or hybrid setups that combine body, hands, and face. The capture itself is only raw input. It still needs interpretation.

In a game pipeline, that interpretation matters because the final character is not simply replaying a recorded scene. The animation must blend with locomotion systems, state machines, inverse kinematics, gameplay triggers, camera logic, and player input. A sprint, vault, recoil, or combat turn must feel authored, but it must also remain flexible enough to respond to the game state.

That is why studios working on action titles, sports games, RPGs, and narrative experiences often combine live performance with technical animation systems. The capture gives the movement authenticity. The pipeline makes it usable.

Teams exploring dedicated capture workflows often begin with a broader production view of motion capture services, especially when the goal is to support both cinematic scenes and gameplay animation within one unified process.

How the Workflow Begins on Set

The first stage is preproduction. Before anyone steps into a suit, the team defines what kind of movement they need, how the characters are rigged, and where the data will be used. A combat game may require clean attack cycles, hit reactions, and traversal clips. A narrative title may need subtle body language, performance timing, and facial nuance. A creature project may require a different calibration strategy entirely.

Once the brief is clear, performers are prepared for the capture volume. In an optical setup, markers are placed across the body so the camera array can track limb positions over time. In inertial workflows, body-worn sensors track motion without depending on a clear line of sight to cameras. Each system has strengths. Optical setups are often preferred when precision matters, while inertial systems can be useful for mobility and faster deployment.

Calibration is critical. The performer must be mapped accurately so the system understands proportions, joint orientation, and movement range. If the calibration is poor, the downstream animation will inherit that error. Small misalignments at this stage can create hours of cleanup later.
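To make the calibration step more concrete, the sketch below estimates two leg segment lengths by averaging marker-to-marker distances over a few calibration frames, and reports how much each length varies between frames. It is a simplification under stated assumptions: the marker names and the `SEGMENTS` table are hypothetical, and real systems estimate joint centers rather than measuring directly between skin-mounted markers. A large spread is one hint that the calibration or marker placement needs another look before capture begins.

```python
import math
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical bone definitions: each bone is measured between two named markers.
SEGMENTS: Dict[str, Tuple[str, str]] = {
    "thigh": ("hip_marker", "knee_marker"),
    "shin": ("knee_marker", "ankle_marker"),
}

def _distances(frames: List[Dict[str, Vec3]], a: str, b: str) -> List[float]:
    # Skip frames where either marker was occluded (missing from the dict).
    return [math.dist(f[a], f[b]) for f in frames if a in f and b in f]

def estimate_segment_lengths(frames: List[Dict[str, Vec3]]) -> Dict[str, float]:
    """Average each bone's marker-to-marker distance over the calibration frames."""
    lengths: Dict[str, float] = {}
    for bone, (a, b) in SEGMENTS.items():
        samples = _distances(frames, a, b)
        if samples:
            lengths[bone] = sum(samples) / len(samples)
    return lengths

def segment_spread(frames: List[Dict[str, Vec3]], bone: str) -> float:
    """Max minus min length for one bone; a large value suggests a calibration problem."""
    samples = _distances(frames, *SEGMENTS[bone])
    return max(samples) - min(samples) if samples else 0.0

calibration = [
    {"hip_marker": (0.0, 1.00, 0.0), "knee_marker": (0.0, 0.55, 0.0), "ankle_marker": (0.0, 0.10, 0.0)},
    {"hip_marker": (0.0, 1.02, 0.0), "knee_marker": (0.0, 0.55, 0.0), "ankle_marker": (0.0, 0.10, 0.0)},
]
lengths = estimate_segment_lengths(calibration)
```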

For game projects, the capture session is usually planned around reusable motion sets. Instead of recording one linear scene, teams capture modular performance blocks. These might include idles, turns, starts, stops, jumps, climbs, interactions, and combat transitions. The intention is not just to record movement, but to build a library that can be assembled dynamically in the engine.

Studios developing character-focused titles often connect capture planning with broader game production workflows, because the requirements of gameplay, camera distance, character class, and engine performance all shape what should be recorded in the first place.

Solving and Cleanup After Capture

Raw capture data is rarely production ready. Once the recording session ends, the next stage is solving. This is where the software interprets marker or sensor data and reconstructs a digital skeleton moving through space. The solve turns a cloud of tracked information into animation that can be edited.
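A full solver fits an entire skeleton to every marker at once, usually through an optimization that this article does not describe. The geometric core of the idea is small, though: given three tracked points around a joint, the angle between the two segments follows from their dot product. This is a minimal sketch with hypothetical marker positions, not a production solver.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

def joint_angle_deg(parent: Vec3, joint: Vec3, child: Vec3) -> float:
    """Angle at `joint` between the segments toward `parent` and `child`, in degrees."""
    u = [p - j for p, j in zip(parent, joint)]
    v = [c - j for c, j in zip(child, joint)]
    norm = math.hypot(*u) * math.hypot(*v)
    if norm == 0.0:
        raise ValueError("markers coincide, so the angle is undefined")
    cos_theta = sum(a * b for a, b in zip(u, v)) / norm
    # Clamp to guard against floating-point drift slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))

# Knee flexion is commonly expressed as 180 degrees minus the interior angle,
# so a straight leg reads as 0 degrees of flexion.
hip, knee, ankle = (0.0, 1.0, 0.0), (0.0, 0.5, 0.0), (0.3, 0.1, 0.0)
flexion = 180.0 - joint_angle_deg(hip, knee, ankle)
```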

After solving comes cleanup. This is where technical artists and animators remove jitter, fix foot sliding, correct joint pops, stabilize contacts, and repair occlusion errors. If a marker disappeared behind a prop or another performer, that missing information has to be reconstructed. If the timing of a movement is correct but the silhouette reads poorly on the character, the animation may need refinement.
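Two of these cleanup tasks are easy to sketch on a single channel of data: reconstructing samples lost to occlusion and damping jitter. The version below uses linear interpolation and a centered moving average purely for illustration; production tools typically offer more sophisticated options such as spline or rigid-body gap filling and frequency-based filters. Representing a lost marker sample as `None` is an assumption of this sketch.

```python
from typing import List, Optional

def fill_gaps(samples: List[Optional[float]]) -> List[float]:
    """Replace missing samples (None) by linear interpolation between the nearest
    valid neighbours; gaps at either end of the clip hold the nearest valid value."""
    valid = [i for i, s in enumerate(samples) if s is not None]
    if not valid:
        raise ValueError("no valid samples to reconstruct from")
    filled: List[float] = []
    for i, s in enumerate(samples):
        if s is not None:
            filled.append(s)
            continue
        prev = max((k for k in valid if k < i), default=None)
        nxt = min((k for k in valid if k > i), default=None)
        if prev is None:
            filled.append(samples[nxt])
        elif nxt is None:
            filled.append(samples[prev])
        else:
            t = (i - prev) / (nxt - prev)
            filled.append(samples[prev] + t * (samples[nxt] - samples[prev]))
    return filled

def moving_average(samples: List[float], radius: int = 1) -> List[float]:
    """Centered moving average; the window shrinks at the ends of the clip."""
    smoothed = []
    for i in range(len(samples)):
        lo, hi = max(0, i - radius), min(len(samples), i + radius + 1)
        window = samples[lo:hi]
        smoothed.append(sum(window) / len(window))
    return smoothed

# Hypothetical vertical position of one marker, with two occluded frames.
marker_y = [1.00, 1.02, None, None, 1.10, 1.07, 1.12]
cleaned = moving_average(fill_gaps(marker_y), radius=1)
```

A wider `radius` removes more noise but also flattens sharp accents in the performance, which is how overprocessed animation starts to feel sterile.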

This stage is where craft becomes visible. Good cleanup preserves the energy of the actor while removing the noise of the recording process. Overprocessed animation can feel sterile. Underprocessed animation can feel unstable. The goal is controlled naturalism.

For games, cleanup must also account for function. A beautifully captured run cycle is not useful if it breaks during directional blending. A convincing attack is not usable if the contact frame does not line up with gameplay logic. Animation teams constantly balance fidelity against responsiveness.
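One way to catch a contact mismatch is to compare where the animation visually makes contact with the frame on which gameplay registers the hit. The sketch below estimates contact as the frame of closest approach between a hand and a target point. The positions, the `event_frame` value, and the one-frame tolerance are all hypothetical; a real pipeline might instead rely on events placed on the clip by animators.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

def contact_frame(hand_path: List[Vec3], target: Vec3) -> int:
    """Estimate contact as the frame where the hand comes closest to the target."""
    return min(range(len(hand_path)), key=lambda i: math.dist(hand_path[i], target))

def contact_matches_event(hand_path: List[Vec3], target: Vec3,
                          event_frame: int, tolerance_frames: int = 1) -> bool:
    """True if the visual contact lands within `tolerance_frames` of the gameplay hit event."""
    return abs(contact_frame(hand_path, target) - event_frame) <= tolerance_frames

# Hypothetical punch: the fist travels toward a target point and pulls back.
punch = [(0.0, 1.4, 0.0), (0.5, 1.4, 0.0), (0.9, 1.4, 0.0), (0.6, 1.4, 0.0)]
chin = (1.0, 1.4, 0.0)
aligned = contact_matches_event(punch, chin, event_frame=2)
```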

Retargeting Performance to a Game-Ready Character

Once the movement is clean, it has to be transferred to the final character. This is known as retargeting. The performer and the game character rarely share identical proportions, so the motion needs translation. A tall hero, a stocky fighter, and a stylized fantasy creature will all interpret the same body data differently.
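A common first step in retargeting is to copy joint rotations onto the target skeleton through a name map and to scale root translation by the ratio of the two characters' hip heights, so a shorter character does not take strides too long for its legs. The sketch below assumes both skeletons share the same rest-pose joint orientations; when they do not, each rotation also needs a per-joint offset correction, which is omitted here. All joint names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (x, y, z, w)

@dataclass
class Pose:
    root_position: Vec3
    local_rotations: Dict[str, Quat]  # joint name -> local rotation

def retarget(poses: List[Pose], source_hip_height: float, target_hip_height: float,
             joint_map: Dict[str, str]) -> List[Pose]:
    """Scale root motion by the hip-height ratio and rename joints via `joint_map`.
    Assumes matching rest-pose orientations, so local rotations are copied unchanged."""
    scale = target_hip_height / source_hip_height
    result = []
    for pose in poses:
        x, y, z = pose.root_position
        root = (x * scale, y * scale, z * scale)
        # Joints with no counterpart on the target skeleton are dropped.
        rotations = {joint_map[j]: q for j, q in pose.local_rotations.items() if j in joint_map}
        result.append(Pose(root, rotations))
    return result

identity: Quat = (0.0, 0.0, 0.0, 1.0)
clip = [Pose((0.0, 1.0, 2.0), {"pelvis": identity, "tail_01": identity})]
hero = retarget(clip, source_hip_height=1.0, target_hip_height=0.8, joint_map={"pelvis": "hips"})
```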

This is where rig quality matters. The better the skeleton, control rig, deformation system, and weighting, the more reliably the captured movement can be applied. A weak rig introduces instability. A strong rig protects the performance while allowing the team to push poses where needed.

In game development, retargeting is also shaped by runtime constraints. The animation cannot simply look correct in a digital content creation (DCC) package. It must survive import into Unreal, Unity, or a proprietary engine, where compression, blending, state transitions, and procedural adjustments will affect the final result.
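Keyframe reduction is one simple form of the compression mentioned above. The sketch below greedily drops keys on a single float channel whenever straight-line interpolation between the kept keys stays within a tolerance. It is illustrative only: Unreal, Unity, and proprietary engines each apply their own compression schemes, and the tolerance values here are assumptions.

```python
from typing import List, Tuple

Key = Tuple[float, float]  # (time, value)

def reduce_keys(keys: List[Key], tolerance: float) -> List[Key]:
    """Keep the first and last keys, plus any key needed so that linear
    interpolation between kept keys stays within `tolerance` of the original."""
    if len(keys) <= 2:
        return list(keys)
    kept = [keys[0]]
    anchor = 0
    for i in range(2, len(keys)):
        t0, v0 = keys[anchor]
        t1, v1 = keys[i]
        # Can a single straight segment from the anchor to key i cover every key in between?
        fits = all(
            abs(v0 + (v1 - v0) * (keys[j][0] - t0) / (t1 - t0) - keys[j][1]) <= tolerance
            for j in range(anchor + 1, i)
        )
        if not fits:
            anchor = i - 1  # the previous key is needed; start a new segment there
            kept.append(keys[anchor])
    kept.append(keys[-1])
    return kept

channel = [(0, 0), (1, 1), (2, 2), (3, 1), (4, 0)]
reduced = reduce_keys(channel, tolerance=0.01)
```

Raising `tolerance` saves memory but can erase small weight shifts and contact details, which is one reason compressed clips are worth reviewing in the engine rather than only in the DCC package.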

That is why character movement often depends on close coordination between capture, rigging, and runtime implementation. Teams building expressive characters for interactive environments usually rely on robust body and facial rigging so the performance remains stable from cleanup through final engine deployment.

How Gameplay Changes Animation Requirements

Film can commit to a fixed camera, fixed timing, and a locked performance. Games cannot. The player can interrupt, redirect, cancel, combine, or repeat actions endlessly. That changes everything about how motion data is prepared.

In Motion Capture in Game Development, animation must be modular. A single movement often exists as part of a system rather than a single clip. Walks need directional variants. Stops need entry and exit compatibility. Combat actions need anticipation, strike, recovery, and cancel windows. Traversal needs contact clarity so the body appears to interact with the world correctly.
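A combat action split into anticipation, strike, recovery, and a cancel window can be represented as plain frame ranges that gameplay code queries every tick. The `CombatAction` type and its frame numbers below are hypothetical; engines usually express the same idea through events attached to the clip.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass(frozen=True)
class Phase:
    name: str
    start: int  # first frame, inclusive
    end: int    # last frame, exclusive

@dataclass(frozen=True)
class CombatAction:
    phases: Tuple[Phase, ...]
    cancel_window: Tuple[int, int]  # [start, end) frames where new input may interrupt

    def phase_at(self, frame: int) -> str:
        for phase in self.phases:
            if phase.start <= frame < phase.end:
                return phase.name
        return "done"

    def can_cancel(self, frame: int) -> bool:
        lo, hi = self.cancel_window
        return lo <= frame < hi

# Hypothetical slash: cancelling is only allowed late in recovery.
slash = CombatAction(
    phases=(Phase("anticipation", 0, 8), Phase("strike", 8, 14), Phase("recovery", 14, 30)),
    cancel_window=(20, 30),
)
```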

This is why game animation often combines captured movement with procedural tools such as inverse kinematics, additive layers, aim offsets, motion matching, or physics-based corrections. The live performance gives the base truth. The engine gives the system adaptability.
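Inverse kinematics is the easiest of these tools to show in isolation. A classic case is a two-bone chain (hip, knee, ankle or shoulder, elbow, wrist) that must reach a target, such as a foot planted on uneven ground the performer never stood on. The sketch below solves the planar version with the law of cosines; it is a simplification, since engine solvers work in 3D and typically use a pole vector to choose the bend plane. Bone lengths and targets are hypothetical.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]

def solve_two_bone(upper: float, lower: float, target: Vec2) -> Tuple[Vec2, Vec2]:
    """Planar two-bone IK rooted at the origin. Returns (middle_joint, end_effector).
    Unreachable targets are clamped so the chain points at them fully stretched or folded."""
    tx, ty = target
    dist = math.hypot(tx, ty)
    if dist < 1e-9:
        raise ValueError("target coincides with the chain root; direction is undefined")
    reach = max(abs(upper - lower), min(upper + lower, dist))
    # Law of cosines: interior angle at the middle joint (knee or elbow).
    cos_mid = (upper**2 + lower**2 - reach**2) / (2 * upper * lower)
    mid_angle = math.acos(max(-1.0, min(1.0, cos_mid)))
    # Law of cosines again: angle between the root-to-target line and the upper bone.
    cos_root = (upper**2 + reach**2 - lower**2) / (2 * upper * reach)
    offset = math.acos(max(-1.0, min(1.0, cos_root)))
    upper_dir = math.atan2(ty, tx) + offset        # bend to one side of the target line
    lower_dir = upper_dir - (math.pi - mid_angle)  # turn back toward the target
    mid = (upper * math.cos(upper_dir), upper * math.sin(upper_dir))
    end = (mid[0] + lower * math.cos(lower_dir), mid[1] + lower * math.sin(lower_dir))
    return mid, end

knee, foot = solve_two_bone(2.0, 1.5, (2.5, 1.0))
```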

The difference becomes especially clear when comparing narrative scenes with player controlled movement. In a cutscene, the studio can preserve more of the original actor timing. In gameplay, timing often has to be reshaped around responsiveness and input readability. The craft lies in deciding what must stay human and what must become systemic.
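Reshaping timing for responsiveness can be as simple as compressing the wind-up of a captured action so the visible result arrives sooner after the button press, while the rest of the clip plays at its recorded speed. The sketch below maps playback time to source-clip time piecewise-linearly and samples a per-frame channel at that time. The function names and frame counts are assumptions for illustration; times are measured in frames.

```python
from typing import List

def source_time(t: float, src_windup: float, new_windup: float) -> float:
    """Map playback time t to source-clip time. The wind-up [0, src_windup] plays
    over [0, new_windup]; everything after it plays at the recorded speed."""
    if new_windup <= 0:
        raise ValueError("new_windup must be positive")
    if t < new_windup:
        return t * (src_windup / new_windup)
    return src_windup + (t - new_windup)

def sample(frames: List[float], time: float) -> float:
    """Linearly interpolate a per-frame channel at a fractional frame time (clamped to the clip)."""
    time = max(0.0, min(len(frames) - 1, time))
    i = int(time)
    if i >= len(frames) - 1:
        return frames[-1]
    f = time - i
    return frames[i] * (1 - f) + frames[i + 1] * f

# Halve a 12-frame wind-up to 6 frames.
remapped = [source_time(t, src_windup=12, new_windup=6) for t in range(10)]
```

Halving a 12-frame wind-up to 6 frames makes the whole action 6 frames shorter while leaving the strike and recovery at the performer's original timing.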

This transition from captured performance to playable animation is closely tied to real-time integration, where the character rig, animation graph, and engine logic determine whether movement feels immediate, believable, and technically stable.
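At the heart of most animation graphs is a state machine that decides which clips may follow which, and crossfades between them. The sketch below is a deliberately reduced version, assuming hypothetical state names and a fixed crossfade length; engine tools such as Unreal's Animation Blueprints or Unity's Animator offer far richer graphs. One simplification worth noting: a request made mid-crossfade here simply restarts the fade from the new previous state, which can pop, whereas engines handle interrupted blends more carefully.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set

@dataclass
class AnimStateMachine:
    transitions: Dict[str, Set[str]]  # state -> states it may move to
    current: str
    blend_time: float = 0.2           # crossfade length in seconds (assumed value)
    previous: Optional[str] = None
    elapsed: float = 0.0

    def request(self, state: str) -> bool:
        """Start a crossfade to `state` if the graph allows it; otherwise ignore the request."""
        if state == self.current or state not in self.transitions.get(self.current, set()):
            return False
        self.previous, self.current, self.elapsed = self.current, state, 0.0
        return True

    def update(self, dt: float) -> Dict[str, float]:
        """Advance time and return blend weights per state (they always sum to 1)."""
        self.elapsed += dt
        if self.previous is None:
            return {self.current: 1.0}
        w = 1.0 if self.blend_time <= 0 else min(1.0, self.elapsed / self.blend_time)
        if w >= 1.0:
            self.previous = None  # crossfade finished
            return {self.current: 1.0}
        return {self.previous: 1.0 - w, self.current: w}

sm = AnimStateMachine({"idle": {"run"}, "run": {"idle", "vault"}}, current="idle")
sm.request("run")
halfway = sm.update(0.1)
```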

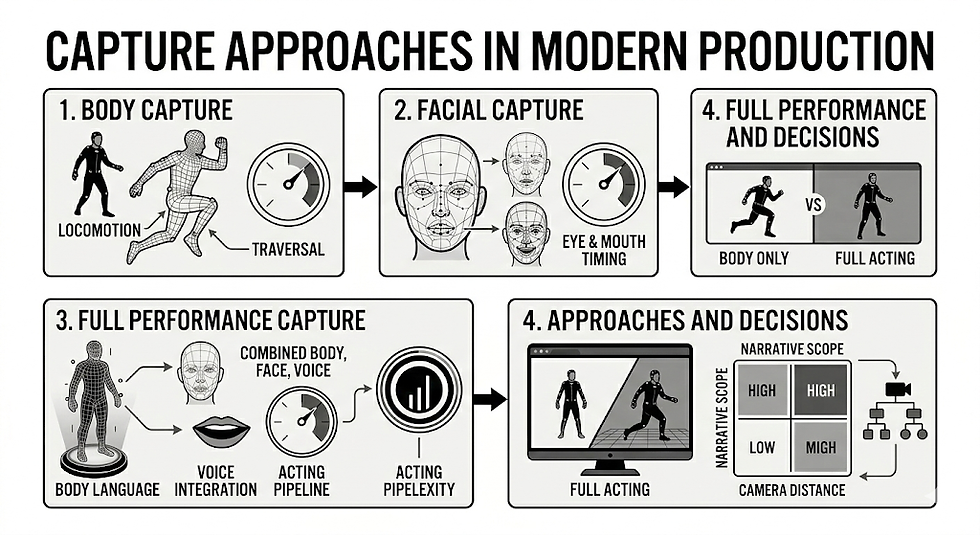

Body Capture, Facial Capture, and Full Performance Capture

Not every game uses the same level of capture. Some projects need only full body motion. Others require facial solving, finger tracking, and detailed dialogue performance. The choice depends on genre, camera distance, production scope, and narrative ambition.

Body capture is often enough for locomotion, combat, sports, and traversal systems. It delivers grounded weight shifts, natural acceleration, and convincing physical rhythm. For many gameplay systems, this is the foundation.

Facial capture becomes more important when the camera moves closer to the character. Dialogue-heavy games, performance-driven cutscenes, and emotionally specific scenes benefit from facial data because subtle timing around eyes, mouth, brows, and asymmetry adds intent that is difficult to fake at scale.
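Facial solvers commonly output per-frame weights that drive a blendshape (morph target) rig, although some face rigs are joint-based or hybrid, and the article does not specify one approach. Under the blendshape assumption, each deformed vertex is the neutral vertex plus a weighted sum of per-shape offsets, $v = v_0 + \sum_i w_i \, \Delta_i$. The sketch below applies that linear model with weights clamped to [0, 1]; the shape names and vertex data are hypothetical.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]

def apply_blendshapes(base: List[Vec3], deltas: Dict[str, List[Vec3]],
                      weights: Dict[str, float]) -> List[Vec3]:
    """Linear blendshape model: v = base + sum_i w_i * delta_i, weights clamped to [0, 1]."""
    verts = [list(v) for v in base]
    for name, w in weights.items():
        w = max(0.0, min(1.0, w))
        for vert, d in zip(verts, deltas[name]):
            for axis in range(3):
                vert[axis] += w * d[axis]
    return [(v[0], v[1], v[2]) for v in verts]

# Two-vertex toy mesh with two hypothetical shapes.
base = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
deltas = {
    "jaw_open": [(0.0, -1.0, 0.0), (0.0, -1.0, 0.0)],
    "smile_l": [(0.5, 0.0, 0.0), (0.0, 0.0, 0.0)],
}
# One frame of solver output; the out-of-range 2.0 is clamped to 1.0.
frame = apply_blendshapes(base, deltas, {"jaw_open": 0.5, "smile_l": 2.0})
```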

Full performance capture combines body, face, and often voice timing into one coherent acting event. This is especially valuable when the studio wants body language and facial emotion to feel inseparable. It also reduces the risk of creating a technically correct scene that feels emotionally disconnected.

For teams deciding between these approaches, the distinction between raw body tracking and a more complete acting pipeline is often best understood through motion capture versus performance capture, since the production demands are related but not identical.

Comparison Table

| Aspect | Motion capture for gameplay | Motion capture for cinematics |
| --- | --- | --- |
| Primary goal | Responsive movement that supports player control | Directed performance with fixed timing |
| Clip design | Modular and blend-ready | Scene-specific and continuity-driven |
| Cleanup focus | Foot locks, transitions, gameplay readability | Silhouette, acting nuance, shot continuity |
| Retargeting pressure | Must survive runtime systems and animation graphs | Can be optimized for a controlled sequence |
| Procedural support | Often extensive | Usually limited and shot-dependent |
| Player interaction | Constant and unpredictable | Minimal or none |
| Performance priority | Function and responsiveness | Acting precision and presentation |

Applications in Game Development

Motion Capture in Game Development is used across far more than walking and running. In sports titles, it helps preserve authentic athletic mechanics and sport-specific posture. In fighting games, it creates sharper physical intent, especially when capture is combined with hand animation and cleanup for impact clarity. In action-adventure titles, it supports climbing, takedowns, weapon handling, and environmental interaction.

Narrative games use performance data to shape character presence. A pause before speaking, a guarded stance, or the slight collapse of posture after a difficult scene can carry as much meaning as dialogue. When paired with facial solving and carefully rigged characters, live performance gives digital actors a more convincing psychological center.

Open-world games use captured libraries for scale. Large animation sets can be built from repeated sessions, then adapted across NPCs, enemies, civilians, and hero characters. Even when not every final animation is directly captured, mocap often serves as the physical reference that keeps the movement language coherent across the project.

Stylized projects also benefit. Capture does not have to produce realism in a narrow sense. It can be exaggerated, edited, and reinterpreted. What matters is that the performance begins with physically coherent motion, then gets shaped to match the visual direction of the game.

Benefits

The clearest benefit of Motion Capture in Game Development is physical credibility. Human movement carries tiny patterns of balance, hesitation, and momentum that audiences recognize immediately. Even when the player cannot describe why an animation feels right, they respond to these patterns.

Another benefit is speed at scale, but only when the downstream pipeline is strong. A well-planned session can generate a large body of useful movement quickly. That said, capture is never a replacement for animation craft. It shifts effort from inventing every motion by hand toward directing performances, processing data, and integrating it intelligently.

Mocap also helps teams unify gameplay and cinematic language. If the same character appears in combat, traversal, dialogue, and scripted scenes, capture can help maintain consistency in movement identity across those contexts. The character feels like one being rather than a collection of unrelated animation solutions.

For directors and narrative teams, performance capture provides another advantage. It lets acting choices appear in the body before they are verbalized. That often creates a stronger emotional read than dialogue alone.

Future Outlook

The future of Motion Capture in Game Development is not simply more capture. It is better integration. Studios are moving toward pipelines where body data, facial solving, runtime retargeting, procedural adaptation, and machine-assisted cleanup work together more fluidly.

Real-time preview is already changing production decisions. Directors can evaluate movement earlier. Technical teams can test engine behavior sooner. Character issues can be spotted before the data reaches final implementation. This shortens iteration loops and reduces the gap between stage performance and playable result.

At the same time, expectations for character fidelity continue to rise. Players increasingly notice the relationship between body motion, facial expression, cloth behavior, and camera framing. The strongest pipelines will be the ones that connect scanning, rigging, animation, rendering, and engine deployment into one coherent character system rather than treating each step as a separate department problem.

As game worlds become more responsive and character-driven, the value of capture will remain tied to craft. The studios that stand out will not be the ones recording the most movement. They will be the ones translating performance into interaction without losing human intention.

FAQs

What is motion capture in game development?

It is the process of recording live human movement and converting it into animation data for digital characters in a game. That data is then solved, cleaned, retargeted, and integrated into gameplay or cinematic systems.

Is motion capture better than keyframe animation?

Not inherently. Mocap and keyframe animation solve different problems. Capture is excellent for grounded movement and performance authenticity. Keyframe work is often better for stylization, exaggerated motion, creatures, or sequences that need heavy artistic control. Most advanced pipelines use both.

How does mocap differ from performance capture?

Mocap usually refers to tracked body movement. Performance capture generally includes body, facial expression, and often synchronized acting intent across the full performance. The distinction matters most in narrative work and in storytelling with close camera framing.

Why does motion capture still need animators?

Because raw capture is not final animation. Animators and technical artists solve tracking issues, shape silhouettes, improve readability, support gameplay systems, and preserve consistency across the full character pipeline.

Can indie studios use motion capture?

Yes, but the success of the result depends less on budget than on pipeline discipline. Even small teams can use capture effectively when they plan for cleanup, retargeting, and engine implementation from the start.

Does mocap only work for realistic games?

No. It is useful in realistic, stylized, and hybrid projects. Captured movement can be edited, exaggerated, and reinterpreted to fit a wide range of art directions.

Conclusion

Motion Capture in Game Development works because it begins with human performance but does not end there. The captured body is only the first layer. What follows is a chain of technical and creative decisions involving solving, cleanup, retargeting, rigging, and engine integration. That chain determines whether the movement feels alive in the hands of a player.

For game studios, the goal is not simply to record action. It is to build a character pipeline where live performance survives the transition into an interactive system. When that happens, the result is not just realistic animation. It is movement that feels authored, embodied, and playable.

For further information or queries, please contact the Mimic Productions press department: info@mimicproductions.com

