Difference Between Mixed Reality and Extended Reality

- Mimic Productions

- 4 days ago

What actually separates Mixed Reality from Extended Reality, and why does the distinction matter when you are designing an immersive experience, a digital human workflow, or a real-time production pipeline?

The terms are often used as if they mean the same thing. In practice, they do not. Mixed Reality vs Extended Reality is not just a naming issue. It affects how teams scope hardware, interaction design, tracking systems, content production, and deployment.

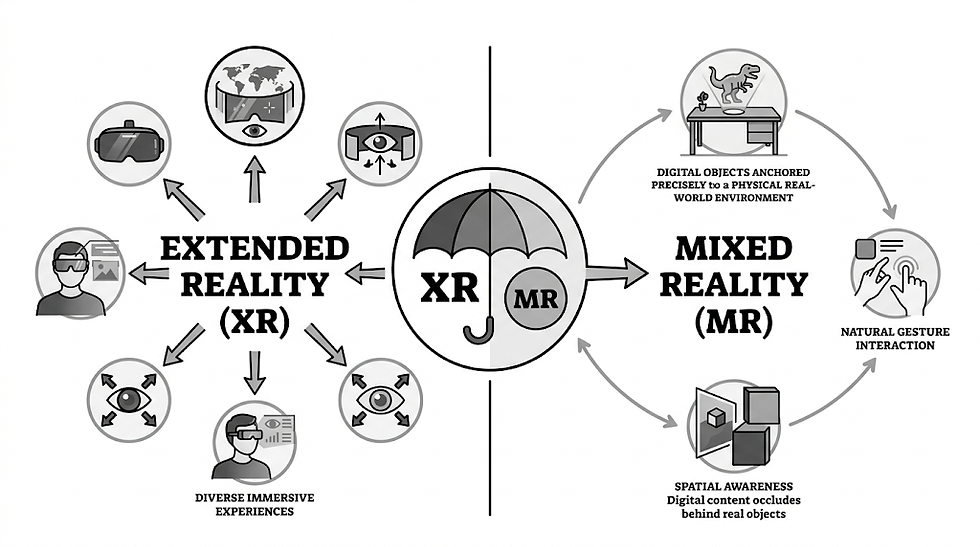

Extended Reality is the wider category. It includes virtual reality, augmented reality, and mixed reality as part of one broader spatial computing landscape. Mixed Reality is more specific. It describes experiences where digital content is anchored in, and responsive to, the physical world, often with persistent spatial awareness, depth understanding, and interaction between real and virtual elements.

For studios, brands, and technical teams, that distinction changes the production brief. A virtual showroom, an industrial training tool, a holographic performance, and a character-driven immersive activation may all sit under the XR umbrella, but they do not require the same capture pipeline, asset fidelity, or interaction logic. A photoreal character prepared for XR experiences must be built differently from a lightweight asset intended for mobile AR, and differently again from a full scene designed for head-mounted mixed reality deployment.

This article breaks down the practical difference between these two concepts, where they overlap, and how to decide which approach fits a production goal.

What Extended Reality Actually Means

Extended Reality is the umbrella term for immersive technologies that alter, replace, or blend a user’s view of the physical world. It is the broad ecosystem that contains virtual reality, augmented reality, and mixed reality.

Think of XR as the full map rather than one destination. It covers experiences such as:

Fully synthetic environments viewed in a headset

Mobile overlays placed on top of a camera feed

Spatial interfaces that understand a room and allow digital objects to coexist with physical space

Real-time character experiences that combine AI, animation, and embodied interaction

Because XR is an umbrella term, it is useful at the strategy level. It helps stakeholders talk about immersive systems without locking the conversation too early into one format. That matters when a concept may evolve from a headset-based prototype into a live event installation, or from a marketing activation into a persistent digital human platform.

For production teams, XR usually signals a broader workflow conversation. You are not just choosing visuals. You are choosing hardware constraints, latency targets, scene optimization, input methods, and the level of realism required from environments, characters, and performance systems. In this context, a studio with experience in immersive production can help define whether the experience should remain format-agnostic at the concept stage or move quickly into a specific deployment path.

What Mixed Reality Actually Means

Mixed Reality is a subset of XR. It refers to experiences where physical and digital elements exist together and can influence one another in a spatially aware way.

That sounds simple, but the production implications are substantial.

In mixed reality, a digital object is not merely displayed over the world. It is aware of the world. It can appear behind a real table, remain anchored to a wall, react to depth, or support interaction that feels spatially consistent. The system needs some understanding of the environment, whether through room mapping, depth sensing, hand tracking, controller input, or surface detection.
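The ability to "appear behind a real table" is commonly achieved with depth-based occlusion. The sketch below is a simplified illustration, assuming the device supplies a per-pixel depth map of the real scene in metres and the renderer knows the depth of each virtual fragment. The function name and data layout are hypothetical, and real engines perform this test on the GPU rather than in Python.

```python
from typing import List

Depth = List[List[float]]  # distance from the viewer in metres, one value per pixel


def occlusion_mask(real_depth: Depth, virtual_depth: Depth) -> List[List[bool]]:
    """Return True for each pixel where virtual content should be drawn.

    A virtual fragment is visible only if it is closer to the viewer than
    the real surface measured at that pixel. Pixels with no virtual content
    use float("inf") in virtual_depth, so they are never drawn.
    """
    return [
        [virt < real for real, virt in zip(real_row, virt_row)]
        for real_row, virt_row in zip(real_depth, virtual_depth)
    ]


# Hypothetical 1 x 4 pixel strip: a real table edge 1.0 m away covers the two
# left pixels and the back wall is 3.0 m away. A virtual character stands at
# 1.5 m on the three left pixels; the rightmost pixel has no virtual content.
real_scene = [[1.0, 1.0, 3.0, 3.0]]
character = [[1.5, 1.5, 1.5, float("inf")]]
print(occlusion_mask(real_scene, character))  # [[False, False, True, False]]
```

The table hides the part of the character standing behind it, which is the spatially consistent result mixed reality aims for. A plain overlay would draw the character on every pixel it covers, regardless of what is physically in front of it.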

This is why mixed reality tends to demand more from both hardware and content. Assets need believable scale. Lighting must feel coherent. Animation must hold up when viewed from changing distances and angles. Interaction design cannot rely only on screen-based assumptions. Even a simple avatar briefing in MR becomes more complex when eye line, gesture readability, proximity, and environmental occlusion all affect the result.

If that experience includes a human likeness, the challenge deepens. Accurate facial topology, deformation quality, and performant rigging all matter more when the viewer can move around the character and inspect it in real space. That is where services such as body and facial rigging become central rather than optional.

Mixed Reality vs Extended Reality in Plain Terms

The clearest way to understand Mixed Reality vs Extended Reality is this:

Extended Reality is the category. Mixed Reality is one method inside that category.

XR answers the question: What family of immersive technologies are we talking about?

MR answers the question: How exactly does digital content behave in relation to the physical world?

A few examples make it clearer.

A fully virtual training simulation in a headset is XR, but not mixed reality.

A phone-based lens that places a cosmetic filter over a face is XR in the broad sense, often treated as AR, but not necessarily mixed reality.

A headset experience where a digital assistant stands on the real floor, responds to hand input, and remains spatially consistent as the user walks around the room is mixed reality, and also part of XR.

This is why Mixed Reality vs Extended Reality matters in production language. One term is strategic and inclusive. The other is technical and specific.
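The three examples can be restated as a small decision rule. This is a deliberately simplified heuristic, not an industry definition: the flags `shows_physical_world`, `anchored_to_space`, and `responds_to_space` are hypothetical simplifications of behaviors described in this article, and in practice the boundary between AR and MR is a spectrum that vendors draw differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Experience:
    name: str
    shows_physical_world: bool  # passthrough, see-through optics, or a camera feed
    anchored_to_space: bool     # content locked to the room, floor, walls, or anchors
    responds_to_space: bool     # reacts to depth, surfaces, occlusion, or physical input


def classify(exp: Experience) -> str:
    """Simplified heuristic: every result is XR; MR needs both anchoring and response."""
    if not exp.shows_physical_world:
        return "VR"  # fully synthetic environment
    if exp.anchored_to_space and exp.responds_to_space:
        return "MR"  # content coexists with, and reacts to, the physical world
    return "AR"      # content is overlaid on the physical world


examples = [
    Experience("Headset training simulation", False, False, False),
    Experience("Phone face filter", True, False, False),
    Experience("Room-scale digital assistant", True, True, True),
]
for exp in examples:
    print(f"{exp.name}: XR / {classify(exp)}")
```

Every experience lands under XR; only the one whose content is both anchored in and responsive to the room is labeled MR. The rule looks at how content behaves, not at which device runs it.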

How the Production Pipeline Changes

Once teams move beyond terminology, the real difference shows up in pipeline design.

XR projects can range from stylized real-time scenes to cinematic virtual spaces. Mixed reality projects, by contrast, are usually less forgiving. They ask assets to coexist with reality, which means inaccuracies stand out immediately.

A digital character built for MR often requires:

Cleaner silhouette logic at close range

More disciplined rig performance

Efficient texture budgets without visible compromise

Stable animation loops and readable gesture timing

Strong real-time integration across device constraints

This is where the distinction between real-time and offline production becomes important. A cinematic asset rendered offline can hide a great deal through controlled framing, lighting, and post work. A mixed reality character cannot rely on that protection. The audience may see it from below, from the side, in harsh ambient light, or at very close distance. That changes modeling, look development, shading, and optimization priorities.

Teams building deployment-ready spatial experiences often need a pipeline that connects character creation with real-time integration, ensuring that performance, visual quality, and interaction remain balanced from DCC tools into engine-based delivery.

Hardware, Tracking, and Interaction Differences

The debate around Mixed Reality vs Extended Reality is often confused because many XR devices support multiple modes. A single headset may offer passthrough, full immersion, spatial anchoring, and hand tracking. That does not make all experiences on that device mixed reality.

What defines MR is not the headset itself. It is the behavior of the content.

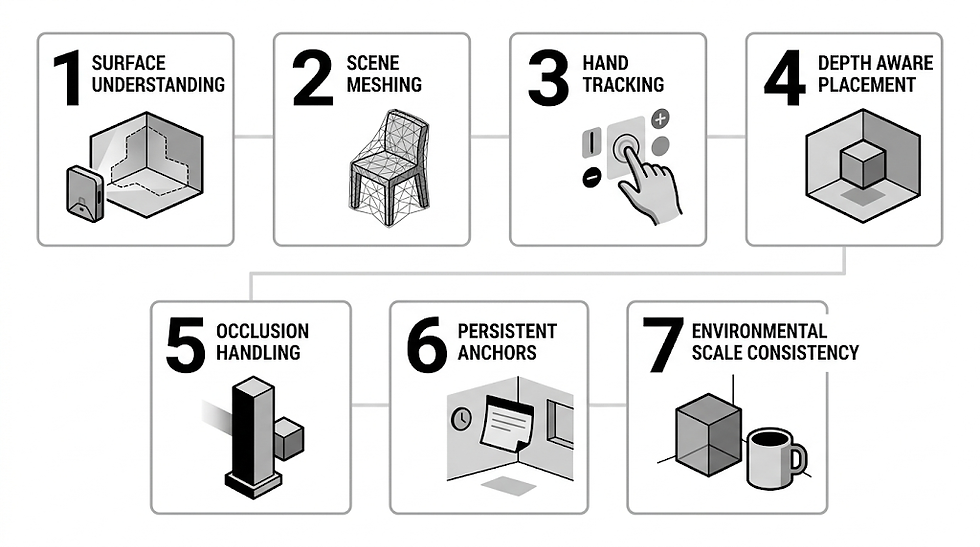

Mixed reality depends heavily on spatial alignment. That can include:

Surface understanding

Scene meshing

Hand tracking

Depth-aware placement

Occlusion handling

Persistent anchors

Environmental scale consistency

Extended Reality, as a broader label, does not require any one of these. An XR deployment may be immersive and effective without interacting with the real world at all.

This distinction matters for budgeting. Mixed reality usually increases technical overhead in QA, interaction design, and environmental testing. It is not enough for the asset to look good in isolation. It must behave convincingly across variable physical contexts.
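Persistent anchors and environmental scale consistency both depend on keeping content "world-locked": its position is stored in room coordinates, and only its position relative to the viewer changes as the viewer moves. The sketch below contrasts this with "head-locked" content, which follows the viewer like a screen overlay. It is a simplified floor-plane (2D) illustration; `Pose2D` and `to_view_space` are hypothetical names, and real XR runtimes work with full 3D poses supplied by their tracking systems.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Pose2D:
    x: float    # viewer position on the floor plane, metres (room coordinates)
    z: float
    yaw: float  # viewer heading in radians, counter-clockwise about the vertical axis


def to_view_space(point: Tuple[float, float], head: Pose2D) -> Tuple[float, float]:
    """Express a room-space point relative to the viewer's position and heading."""
    dx, dz = point[0] - head.x, point[1] - head.z
    # Rotate by -yaw to undo the viewer's heading.
    c, s = math.cos(-head.yaw), math.sin(-head.yaw)
    return (c * dx - s * dz, s * dx + c * dz)


# World-locked: the digital assistant is stored at a fixed room position.
assistant_in_room = (0.0, 2.0)
# Head-locked: a menu stored directly in view space, 2 m in front of the viewer.
menu_in_view = (0.0, 2.0)

# The viewer starts at the room origin, then walks 1 m toward the assistant.
for head in (Pose2D(0.0, 0.0, 0.0), Pose2D(0.0, 1.0, 0.0)):
    print("assistant:", to_view_space(assistant_in_room, head), "menu:", menu_in_view)
```

Walking 1 m toward the assistant reduces its view-space distance from 2 m to 1 m, just as with a real object standing on the floor, while the head-locked menu stays 2 m ahead no matter where the viewer goes. Testing that world-locked behavior across different rooms and lighting conditions is part of the extra QA overhead described above.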

Where Each Approach Works Best

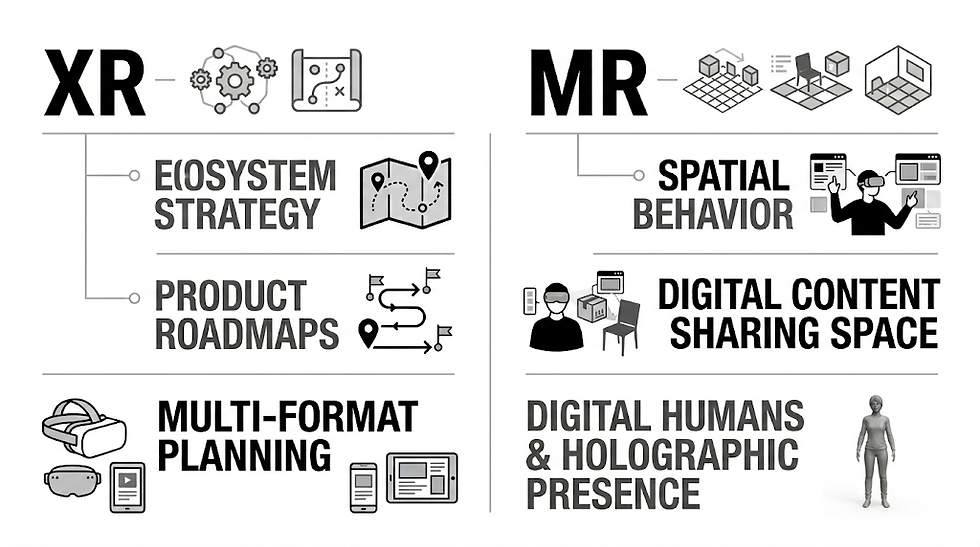

XR is the better language when you are talking about ecosystem-level strategy, product roadmaps, or multi-format content planning. It gives room to compare devices, delivery channels, and use cases without narrowing the solution too early.

MR is the better language when spatial behavior is central to the outcome. If the experience depends on digital content sharing space with people, objects, architecture, or live events, mixed reality is usually the more accurate description.

That is especially true for projects involving digital humans, holographic presence, or embodied AI. A character that simply appears on a flat display is not solving the same problem as one that inhabits a room, interacts with gesture input, or performs in a live activation. In those cases, services around AI avatars or volumetric presentation formats such as holograms can become part of the same spatial design conversation, even if the final deployment path differs.

Comparison Table

| Aspect | Extended Reality | Mixed Reality |
|---|---|---|
| Definition | Umbrella term for immersive technologies | Specific subset of XR |
| Includes | VR, AR, MR, and related spatial systems | Blending of physical and digital space |
| Relationship to real world | May replace, overlay, or ignore the physical environment | Must understand and respond to the physical environment |
| Spatial awareness | Optional depending on format | Usually essential |
| Interaction model | Varies widely | Often hand, gaze, or controller input, plus environment awareness |
| Content demands | Broad range from simple to highly complex | Typically higher spatial and performance demands |
| Best use | Strategy, classification, multi-format planning | Precise deployment where real and virtual must coexist |
| Character requirements | Depends on use case | Strong need for robust rigging, scale, and real-time performance |

Applications

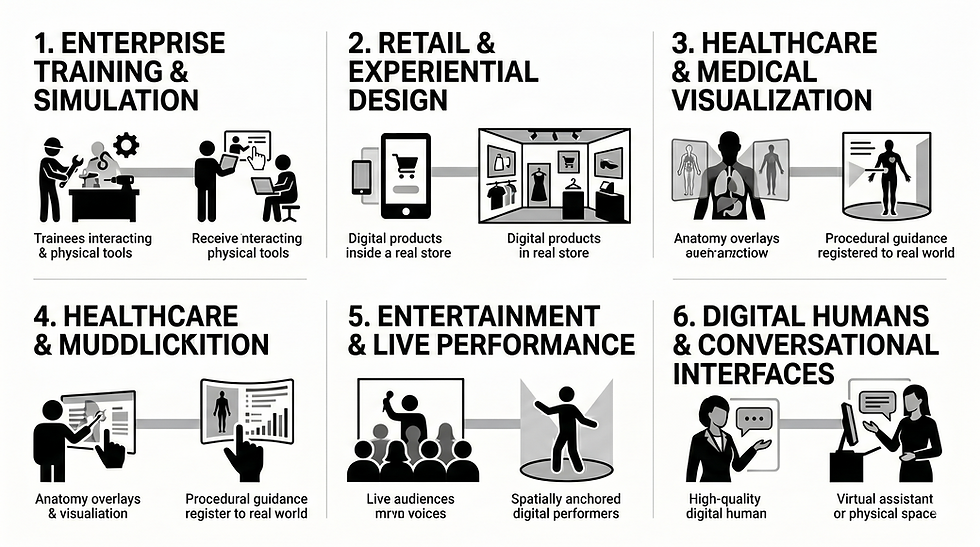

Enterprise Training and Simulation

XR is often used as the top-level framework for training systems across VR, AR, and MR. Mixed reality becomes the preferred mode when trainees need to interact with physical tools, real equipment, or site-specific layouts while receiving digital guidance.

Retail and Experiential Design

Extended Reality can cover virtual showrooms, product visualization, and immersive brand storytelling. Mixed reality is more relevant when digital products or characters must appear inside a real store, event space, or pop-up environment with believable scale and presence.

Healthcare and Medical Visualization

XR is useful for broad medical simulation, remote education, and patient communication. MR is particularly valuable when anatomy overlays, procedural guidance, or room aware interfaces need to remain registered to the real world.

Entertainment and Live Performance

The language of XR suits broad entertainment planning across virtual concerts, headset content, and interactive installations. Mixed reality becomes the sharper term when live audiences encounter spatially anchored digital performers, responsive stage elements, or room-aware storytelling.

Digital Humans and Conversational Interfaces

This is one of the clearest areas where Mixed Reality vs Extended Reality becomes operational rather than theoretical. A digital human inside an XR strategy could exist in VR, on mobile, or across multiple touchpoints. A mixed reality digital human must survive close inspection inside physical space, which raises the bar for scanning, topology, facial deformation, animation readability, and engine performance.

Benefits

Why Extended Reality Matters

It provides a clear umbrella for planning immersive ecosystems

It helps brands and studios compare formats without premature constraints

It supports long-term content thinking across devices and channels

It is useful when one core asset base may feed multiple outputs

Why Mixed Reality Matters

It enables more context-aware interaction

It creates stronger physical presence for digital content

It supports spatial storytelling, training, and collaboration

It can make digital humans and virtual objects feel materially present in a way that flat overlays cannot

In the Mixed Reality vs Extended Reality discussion, the real benefit of separating the terms is better decision making. Clear language leads to better scoping, better pipeline choices, and fewer mismatches between concept and delivery.

Future Outlook

As devices improve, the line between immersive modes will continue to blur from a consumer perspective. Passthrough systems, spatial computing platforms, and AI-driven interfaces are already making experiences more fluid across categories. But from a production perspective, the distinction between XR and MR will remain useful.

Why? Because pipelines still need specificity.

A team building for mixed reality must still think about environment mapping, scene persistence, occlusion, comfort, asset density, animation clarity, and real-time responsiveness in a way that broader XR planning does not always require. At the same time, more studios will treat XR as a connected ecosystem where characters, spaces, and interaction models move between headset experiences, mobile touchpoints, holographic displays, and live activations.

That convergence will make asset interoperability more important. Character systems will need to travel cleanly between real-time engines, event deployments, and conversational interfaces. The studios best positioned for that future will be the ones that understand both cinematic asset quality and performance-aware delivery.

FAQs

Is Mixed Reality the same as Extended Reality?

No. Extended Reality is the umbrella category. Mixed Reality is one specific type of immersive experience within that category.

Is Mixed Reality more advanced than XR?

Not exactly. XR is not a single technology to rank against MR. It is the broader field. Mixed reality is simply more specific and often more demanding in terms of spatial awareness and interaction design.

Does every AR experience count as Mixed Reality?

No. Some AR experiences are simple overlays without meaningful understanding of the physical environment. Mixed reality usually implies stronger spatial logic and interaction between digital and real elements.

Which is better for digital humans?

That depends on the use case. XR is useful when a digital human needs to operate across multiple platforms. Mixed reality is better when that character must occupy real space convincingly and respond to the surrounding environment.

Why do teams confuse the two terms?

Because device marketing often groups immersive experiences together, and many platforms support several modes at once. The language becomes clearer when you focus on how the digital content behaves rather than what the hardware is called.

When should a business use the phrase Mixed Reality vs Extended Reality?

Use it when the audience needs clarity on scope. It is especially useful in RFPs, production briefs, technical planning, and stakeholder education, where the wrong term can lead to the wrong expectations.

Conclusion

The difference between XR and MR is simple once you strip away the noise.

Extended Reality is the umbrella. Mixed Reality is the spatially aware subset where digital and physical elements truly coexist.

That distinction matters because immersive production is never just about terminology. It shapes interaction design, character preparation, engine integration, tracking demands, and deployment decisions. In other words, Mixed Reality vs Extended Reality is really a question about production intent. Are you defining a broad immersive ecosystem, or are you building an experience where digital content must behave convincingly inside the real world?

For studios working with digital humans, real-time characters, and cinematic-quality assets, that answer affects everything from scanning and rigging to animation and final delivery. The more precise the language, the stronger the outcome.

For further information and queries, please contact the Press Department, Mimic Productions: info@mimicproductions.com
